Content Philosophy & AI Transparency

Why This Matters

Every piece of content on this site exists for one reason: to prepare you for the cybersecurity and digital forensics workforce. It is not here to check a box or fill a semester, but to make you employable and competent on Day One.

That commitment shapes every decision in how this material is built, from the learning objectives down to individual lab exercises. What follows is a transparent look at the philosophy behind this content and the role that AI plays (and doesn't play) in creating it.

The Foundation

This program and its courses were designed with the guidance of:

- Faculty subject matter expertise

- Industry partners

- National Initiative for Cybersecurity Education (NICE) Framework, developed by NIST

- Requirements to receive and maintain the Center of Academic Excellence in Cyber Defense (CAE-CD) designation from the NSA

- Certification exam objectives (primarily CompTIA)

This is the foundation. Any significant course change must go through a strict approval process and the Course Curriculum Committee.

When a course aligns with an industry certification like CompTIA, those exam objectives become the course's North Star. All content is intended to prepare you to pass the industry certification while also giving you hands-on experience for the workforce. Because CompTIA updates its exams about every three years, we also strive to keep course material current with newly added exam objectives and emerging technologies.

Beyond certifications, industry partners point us toward specific standards and frameworks they want potential employees to know. This often ties back to guidelines from NIST, ISO, OWASP, and others. These become the authoritative sources of truth for course content.

On top of all of that, I layer in my own industry knowledge and experience to make sure what you are learning is current and relevant. During my time at MGM Studios and Amazon, my work spanned multiple domains:

- Security Operations (IAM, email security, security awareness training, incident response, SIEM log analysis)

- Cloud Security Engineering (deploying and integrating security tools, cloud compliance and auditing)

- Digital Forensics and eDiscovery

- GRC (policy writing, documentation, third-party risk assessments)

Where AI Fits In

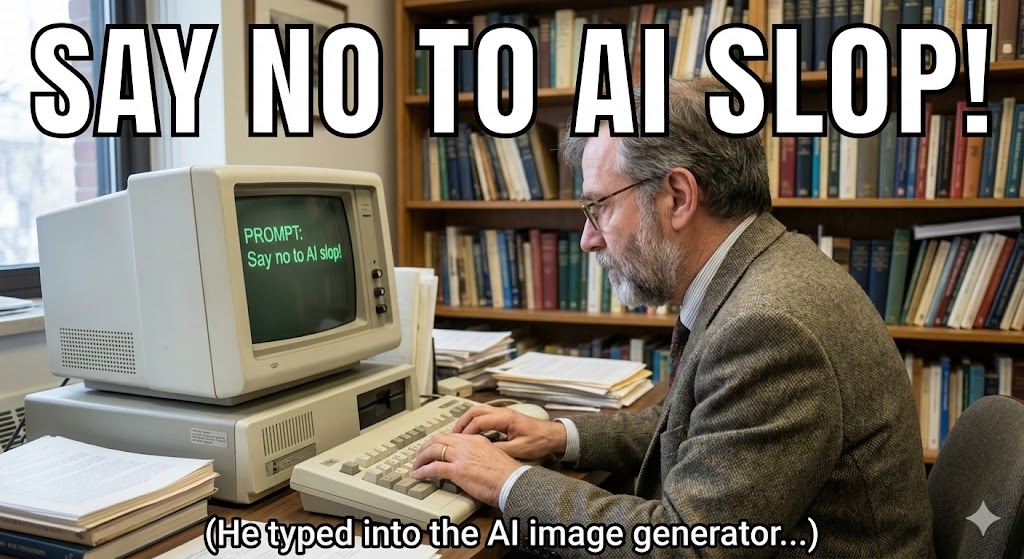

As someone who feels like they are developing a strong allergic reaction to "AI slop", let me be direct about how AI is used here.

I do utilize Generative AI tools to assist me with content creation and refinement. Here's how they fit into the workflow:

You know what they say: Garbage In, Garbage Out.

I approach content creation at the weekly/module/chapter level based on the overall learning objectives of the class. I create highly detailed prompts around main topics and sub-topics, with notes about what specific concepts to include. Think of it like a detailed study guide for the chapter. AI helps me put the information together more coherently and in a professional "technical textbook" style. Where it really shines is in making connections to previous chapters and "connecting the dots", which is so important in cybersecurity.

This process goes through many iterations until I have a solid starting point of information. "One and done" prompts in generative AI, in my opinion, are what lead to boring, lifeless AI slop.

From there:

- I export the drafted information in markdown format (.md) and add it to Visual Studio Code, where I manage my local eBook repository.

- I edit and fully validate that the information is correct, factual, and relevant.

- I fill in any gaps.

- I locate relevant images that are publicly available (with sources) or create my own where possible (often with Gemini's Nano Banana image generation).

- Once everything is good, I "push" it to my GitHub account, which runs MkDocs and displays everything to you in this document repository.
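For the curious, the last few steps above could be scripted roughly as follows. This is only a minimal sketch under stated assumptions, not my actual tooling: the `docs/` folder, the `drafts/week01.md` file name, the commit message, and the helper names `plan_publish` and `publish` are all hypothetical, and it assumes `git` and `mkdocs` are installed and that the repository is already connected to GitHub. It defaults to a dry run that only prints the commands.

```python
from __future__ import annotations

import shutil
import subprocess
from pathlib import Path


def plan_publish(draft: Path, docs_dir: Path, message: str) -> list[list[str]]:
    """Return the commands that would publish one drafted chapter.

    Hypothetical helper: the paths and commit message are assumptions.
    """
    target = docs_dir / draft.name
    return [
        # Build locally first; --strict turns MkDocs warnings into errors.
        ["mkdocs", "build", "--strict"],
        ["git", "add", str(target)],
        ["git", "commit", "-m", message],
        ["git", "push"],
    ]


def publish(draft: Path, docs_dir: Path, message: str, dry_run: bool = True) -> None:
    """Copy a reviewed markdown draft into the docs folder and push it."""
    if draft.suffix != ".md":
        raise ValueError(f"expected a markdown file, got {draft.name}")

    commands = plan_publish(draft, docs_dir, message)
    if dry_run:
        # Only show what would run; nothing is copied or pushed.
        for command in commands:
            print(" ".join(command))
        return

    docs_dir.mkdir(parents=True, exist_ok=True)
    shutil.copy2(draft, docs_dir / draft.name)
    for command in commands:
        # check=True stops at the first failing step (e.g. a broken link).
        subprocess.run(command, check=True)


if __name__ == "__main__":
    publish(Path("drafts/week01.md"), Path("docs"), "Add week 1 chapter")
```

Keeping the command list separate from the code that runs it makes the dry run easy to inspect before anything is pushed. The human review steps (validating facts, filling gaps, sourcing images) happen before this point and are deliberately not automated.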

Note

I do plan on putting together a workshop sometime to showcase how you can build your own MkDocs documentation site! It would be a great way to showcase your projects, resume, etc. for potential employers to view. If you are curious, this is a great tutorial that already exists: https://youtu.be/xlABhbnNrfI.

What AI Does NOT Do

To be clear about the boundaries:

- AI does not set learning objectives. Curriculum design is driven by faculty expertise, industry input, NSA CAE-CD requirements, and certification standards.

- AI does not determine what is technically accurate. Every claim, command, and concept is validated against authoritative sources and my own professional experience. AI-generated content is a starting draft, not a finished product.

- AI does not replace the feedback loop. Student performance, questions, and confusion are what drive revisions.

Continuous Improvement

Based on student performance and feedback, I continuously identify areas that need more clarification. This could include rewriting text, adding clearer examples or case studies, or building additional interactive activities and hands-on technical labs. This site is living documentation — it evolves alongside the field and alongside the students who use it.

Note

Fun Fact: AI was NOT used in the writing of this page :) Except for creating the very meta meme.